Abstract

This study introduces a hybrid human-AI workflow to qualitative data analysis within the participatory design of the Writing Analytics Toolkit (WAT), an open-source platform that provides formative feedback on student writing using natural language processing. The toolkit includes a classroom-facing implementation (WAT Classroom; WAT-C), designed to support instruction, and a researcher-facing implementation (WAT Researcher; WAT-R), designed to support analytic and validation workflows. Nine experienced college writing instructors (with 97 cumulative years of teaching) participated in focus group sessions to evaluate an early prototype of the classroom version of WAT (WAT-C), offering formative input on usability, instructional alignment, and feedback clarity. To analyze the resulting qualitative data, we employed a novel AI-augmented analytic process: GPT-4o, integrated within a secure, retrieval-augmented system, to generate inductive codes and preliminary themes from transcripts. These AI-generated outputs were iteratively reviewed, critiqued, refined, and synthesized by researchers, supporting both analytical scalability and interpretive rigor. This human-AI partnership enabled efficient thematic exploration while preserving methodological transparency and researcher judgment. Findings from both qualitative and complementary survey data identified four key design priorities: (1) clearer, more concise feedback, (2) increased instructor customization, (3) reduced administrative burden, and (4) a simplified user interface. These insights directly informed subsequent revisions to WAT-C, including a redesigned feedback interface, customizable metric targets, learning management system integration, and a more intuitive layout. This work illustrates how large language models (LLMs) can support inductive qualitative analysis within participatory design workflows. Moreover, results demonstrate how this workflow can inform iterative educational technology development. Implications include the need to ensure ethical oversight, researcher-led interpretation, and alignment with instructional priorities when incorporating AI into the design of educational technologies.

Keywords

Introduction

Automated writing evaluation (AWE) and writing analytics tools offer scalable support for both teaching and learning of writing by providing students with immediate, formative feedback to guide revision (Potter et al., 2025; Strobl et al., 2019; Wilson & MacArthur, 2024). However, developing effective AWE tools requires user-centered design to ensure alignment with instructional needs (Tuhkala, 2021). These design processes are labor-intensive and difficult to scale (Abras et al., 2004), but recent advances in large language models (LLMs) present new opportunities to reduce the time and effort involved in analyzing qualitative user feedback. We posit that mindful integration of LLMs may potentially streamline critical participatory design processes in AWE tools (McNamara & Potter, 2024).

This paper focuses on how LLMs can be used to support qualitative data analysis during participatory design (Muller & Kuhn, 1993; Ten Holter, 2022; Wacnik et al., 2025). Our study is situated within the broader development of the Writing Analytics Toolkit (WAT), an open, research-driven platform that provides writing analytics for students, teachers, and researchers, designed in collaboration with writing instructors and researchers. WAT comprises two complementary implementations: WAT Classroom (WAT-C), which supports instructional use by students and teachers, and WAT Researcher (WAT-R), which supports post hoc linguistic analysis of writing corpora. In this paper, we focus on WAT Classroom (WAT-C), the classroom-facing implementation of the toolkit.

The paper makes two interrelated contributions. The primary contribution is methodological; it introduces a hybrid AI-human workflow for inductive thematic analysis that leverages LLMs to assist in qualitative coding and theme generation. The second, applied contribution demonstrates how this workflow can be used to inform the iterative redesign of WAT-C through analysis of instructor feedback. To contextualize this work, the current phase builds on a prior participatory design study that established WAT-C’s core interface, functionality, and descriptive writing metrics (Li et al., 2022). In this phase, instructors participated in two focus group sessions to evaluate and refine these metrics, interpret their instructional meaning, and improve how feedback is presented and integrated into instruction.

To analyze instructor insights, we employed a mixed-methods design that combined LLM-assisted qualitative analysis with human coding and review. Focus group transcripts were uploaded to a secure analysis platform, where the ChatGPT-4o model (OpenAI, 2024) was guided through a structured prompt framework to identify preliminary themes. These outputs were then verified, refined, and supplemented by human researchers to enhance the trustworthiness and interpretive accuracy of the findings. This hybrid AI-human workflow enabled a systematic and efficient analysis of instructor feedback, informing the redesign of WAT-C to better meet instructional needs. In doing so, the study demonstrates how LLM-supported workflows can enhance participatory design processes while maintaining methodological rigor and improving the efficiency of qualitative analysis. While much of the AI research in education has centered on detection (e.g., identifying student characteristics, predicting outcomes, or distinguishing human- from AI-generated text), this study illustrates a different use of AI that supports qualitative interpretation and collaborative design. By employing LLMs to assist researchers and educators in making sense of complex feedback data, the work extends AI’s role in education beyond detection toward participatory, interpretive, and human-centered applications.

Related Work

AWE and writing analytics tools are systems designed to provide students and teachers with immediate, formative feedback on their writing, offering scalable opportunities for revision and practice (McNamara & Kendeou, 2022; Potter et al., 2025; Shermis & Wilson, 2024). A number of AWE systems have been developed to support writing improvement, offering students formative feedback on structure, cohesion, and other analytical traits (Butterfuss et al., 2022; Correnti et al., 2022; Link et al., 2014; Knight et al., 2017, 2020). These tools have shown promise for improving writing outcomes and writing self-efficacy, particularly when paired with classroom instruction (Dikli & Bleyle, 2014; Fleckenstein et al., 2023; Liu et al., 2017; Palermo & Thomson, 2018; Wilson & Roscoe, 2020).

Despite their growing presence in writing instruction, AWE systems vary considerably in the extent to which they are adopted and sustained in educational contexts (Potter & Wilson, 2022). Prior work indicates that students’ perceptions of AWE significantly predict their future intentions to continue using or recommending the tool, even if such perceptions have minimal impact on their immediate revision behaviors (Roscoe et al., 2017). Students have also expressed dissatisfaction with the personalization, fairness, or depth of the feedback provided by AWE systems, which can affect their engagement and, in turn, the tool’s instructional effectiveness (Wilson, Delgado, et al., 2024). Similarly, teacher perceptions and contextual factors, such as curriculum alignment, time constraints, and availability of training, can serve as barriers to implementation fidelity and instructional effectiveness (Wilson, Zhang, et al., 2024). Writing instructors have raised concerns about the pedagogical misalignment of systems that rely on predictive scoring instead of providing descriptive, actionable feedback (Li et al., 2022). In addition to these instructional and implementation concerns, AWE systems are susceptible to design biases that can reinforce inequities and standard language ideologies (Goldshtein, Alhashim, & Roscoe, 2024; Goldshtein, Ocumpaugh, et al., 2024). The proprietary nature of most AWE systems also limits transparency, adaptability, and access for educators and researchers (McNamara & Potter, 2024; Strobl et al., 2019).

A range of studies have applied participatory design methods, a form of user-centered design that emphasizes collaboration with end users throughout the development process (Muller & Kuhn, 1993). For example, such methods have been used to identify meaningful writing process indicators for feedback with different stakeholders (Conijn et al., 2022), integrate teacher-centered design into intelligent tutoring systems (Stone et al., 2018), and co-develop feedback platforms that combine automated and peer review to support English language learners (Liaqat et al., 2021). Collectively, these studies underscore the importance of developing writing analytics tools in close collaboration with end users to ensure the tools are usable, interpretable, and aligned with authentic educational needs.

Although user-centered design is critical for developing effective and pedagogically aligned writing technologies, it is also time-consuming and labor-intensive, particularly when it comes to analyzing qualitative user data (Abras et al., 2004; Roscoe et al., 2018). Focus group transcripts, open-ended surveys, and interviews provide rich insight into user needs and instructional contexts, but interpreting these data requires significant human effort and expertise. In addition to the volume of qualitative data, the process of codesign introduces additional complexities, as designers must navigate the creative collaboration of diverse stakeholders while balancing the tension between understanding existing practices and envisioning future ones (Steen, 2011). As a potential approach to these challenges, LLMs may help reduce the labor demands of user-centered development, and in this study, we examine their use specifically for qualitative data analysis, a promising application alongside others such as crowdsourced feedback and iterative prototyping (McNamara & Potter, 2024).

GenAI tools, particularly LLMs, have recently been evaluated as a tool to support qualitative data analysis (Khalid & Witmer, 2025; Zambrano et al., 2023). LLMs are neural networks trained on massive text corpora for language processing and generation, and they form the foundation of many generative artificial intelligence (GenAI) tools (see Minaee et al., 2024, for a review). Initial applications of LLMs for qualitative data analysis have primarily focused on deductive coding, in which the model applies predefined codebooks to structured textual data (Chew et al., 2023; Kirsten et al., 2024; Xiao et al., 2023). However, a growing body of work has begun to explore their use in inductive coding, where codes are generated directly from the data (Bijker et al., 2024; Chen et al., 2024; Khalid & Witmer, 2025; Katz et al., 2024; Prescott et al., 2024; Theelen et al., 2024; Turobov et al., 2024; Zhang, Wu, Xie, Kim, & Carroll, 2023; Zhang, Wu, Xie, Lyu, et al., 2023; Zhang et al. 2024; Zhao et al., 2024). Inductive analysis differs from deductive approaches in that researchers generate codes and themes directly from the data, allowing patterns, meanings, and concepts to emerge organically through iterative interpretation (see Saldaña, 2014; Thomas, 2006). As a result, inductive analysis is typically more complex and interpretive than deductive coding. Interestingly, some studies suggest that LLMs may appear more effective in inductive tasks, not because they outperform human coders, but because the lack of a predefined codebook makes it harder to identify clear errors, particularly when models generate plausible yet superficial themes (Bijker et al., 2024; Chen et al., 2024).

Nevertheless, studies have shown that when guided by structured prompts, LLMs can support inductive coding processes that yield themes aligned with those generated by human researchers, and that they can also reduce the time required for analysis (Bijker et al., 2024; Theelen et al., 2024; Prescott et al., 2024; Zhao et al., 2024). Their effectiveness, however, depends on several factors, including the choice of tools, prompt design, and the degree of human involvement throughout the workflow. Prior studies indicate better performance in inductive coding when LLMs are compared to machine learning and natural language processing approaches (Chen et al., 2024), and that newer models are more reliable with human coders than earlier models (Kirsten et al., 2024).

Beyond model selection, prompt engineering has emerged as a critical factor in performance. Across multiple studies, prompts were found to perform best when they met three key criteria: (1) specifying the model’s role (e.g., “You are a qualitative research expert”), (2) defining the expected structure and format of the input and output (e.g., datasets, themes, justification), and (3) incorporating reasoning-based strategies such as rationale generation, exemplars, or chain-of-thought prompting to mirror human interpretive processes (Wei et al., 2022; Zhang, Wu, Xie, Kim, & Carroll, 2023; Zhang, Wu, Xie, Lyu, et al., 2023; Zhang et al. 2024). For instance, researchers have improved model outputs in qualitative data analysis by presenting multiple rounds of example annotations, guiding the model to revise or refine its coding decisions in response to human feedback, or prompting it to generate justifications alongside each label (Chew et al., 2023; Dunivin, 2024; Zhao et al., 2024). These prompt engineering practices enhance transparency, interpretability, and researcher control over analytic content and meaning-making, making LLM outputs easier to audit, refine, and integrate into the development of qualitative findings. Informed by these insights, our study adopts a structured prompt framework that implements several of these strategies (see Method).

In addition to prompt design, recent studies have also examined methodological enhancements to improve LLM performance through integrated, human-in-the-loop workflows. For instance, during codebook co-development, models are prompted iteratively with human-reviewed examples to refine category definitions and clarify boundary cases (Katz et al., 2024; Khalid & Witmer, 2025; Zhao et al., 2024). Notably, the strongest performance in terms of model accuracy and reliability in inductive analyses has been observed in hybrid workflows where human researchers guide early iterations, review LLM-generated codes, and incorporate contextual knowledge to calibrate and revise model outputs (Chen et al., 2024; Theelen et al., 2024; Zhang, Wu, Xie, Lyu, et al., 2023). These findings underscore that LLMs are most effective when embedded within structured, iterative analytic processes that leverage human expertise at key points in the workflow. Even when assisted by AI, it is the researchers who ultimately shape, interpret, and take responsibility for the analysis (Hayes, 2025).

Despite these promising developments, recent findings have also underscored important limitations in LLM-assisted inductive coding. Although LLMs can generate outputs that resemble and are reliable with researcher-led qualitative data analysis (e.g., crafting themes, summaries, and codes), they do not think, interpret, or reason as human experts do (e.g., Hayes, 2025). Their outputs are shaped by patterns in their training data, which embed the biases of those data (e.g., Warr & Heath, 2025). These challenges are especially salient in qualitative research, where the analysis centers on understanding participants’ social contexts and meaning-making processes, and where researcher reflexivity and positionality play a critical role in shaping interpretation (e.g., Aspers & Corte, 2019; Fossey et al., 2002; Corlett & Mavin, 2018). In inductive qualitative data analysis, LLM outputs may overlook subtle meanings, produce overly broad or redundant codes, or generate hallucinated interpretations that misrepresent or fabricate textual data (Chen et al., 2024; Turobov et al., 2024; Zhang et al., 2024). While structured prompts and hybrid workflows can help reduce these risks, the judgment of trained researchers remains critical to guiding analysis, interpreting ambiguity, and ensuring that codes align with the goals of a study. Even when LLMs perform well, they lack expertise and lived experience to interpret complex social meaning (Imundo et al., 2024). Qualitative researchers are not neutral instruments; their identity, perspective, and relationship to the research context shape the insights that emerge from the data (Bourke, 2014). Consequently, there is growing consensus that LLMs should not be viewed as replacements for human qualitative researchers, but rather as tools that can augment human insight and support scalable, rigorous analysis under researcher supervision (Bijker et al., 2024; Hayes, 2025; Katz et al., 2024). Thus, maintaining a human-centered orientation is essential for using LLMs in qualitative research.

Present Study

The present study responds to calls for best practices in LLM–assisted qualitative analysis by pursuing a dual aim: (1) to test a hybrid AI-human workflow for qualitative data analysis and to refine the alpha version of WAT-C through participatory design with writing instructors, and (2) to examine how a hybrid AI-human workflow can support, rather than replace, qualitative data analysis within the design process. Using a mixed methods approach, qualitative analysis of focus group transcripts was integrated with quantitative survey results to synthesize writing instructors’ feedback and design recommendations for the next iteration of WAT-C. In this context, the study explores how LLMs can support qualitative analysis without replacing the interpretive expertise of human researchers. Specifically, the analysis examined how writing instructors interpreted the usability and instructional relevance of WAT-C metrics and affordances, and identified their design contributions and priorities for improving the tool. The study also evaluates how LLMs can be integrated into a participatory design workflow to support, rather than replace, human-led qualitative analysis.

Method

Research Design

This study employed a participatory design research framework. Participatory design emphasizes the active involvement of users in the development and evaluation of tools and systems (Muller & Kuhn &, 1993; Wacnik et al., 2025). This approach has been leveraged in educational contexts to engage teachers in developing educational technologies, including writing analytics tools (Conijn et al., 2022; Cumbo & Selwyn, 2022; Tuhkala, 2021). As Wacnik et al. (2025) notes in a recent systematic review on participatory design, the method encompasses a broad range of user-centered design practices and is not defined by a singular methodology. Instead, participatory design varies across projects in its timing, techniques, and the degree of stakeholder involvement. Following Wacnik et al.’s (2025) framework, this study engaged in a participatory design process characterized by several key leverage points that influence design equity. First, the process fell between emergent and predetermined: while the interface, writing metrics, and feedback types were initially designed by the research team, instructors provided feedback to revise and reshape how these elements were defined and presented. Second, stakeholder participation was direct, with instructors actively contributing to design discussions (e.g., input on interface changes and metric names and their interpretations). Third, the timing of participation occurred at a mid-stage in development, building on an earlier phase in which different writing teacher participants contributed to the creation of WAT-C’s initial interface and metric framework (Li et al., 2022), but preceding classroom implementation. Fourth, instructor engagement consisted of two focus group sessions within a single design phase without re-engagement in later stages, constituting a one-time participatory process rather than iterative involvement across multiple phases (Wacnik et al., 2025). Although participation in this phase was limited due to time constraints, it contributed to a broader iterative process spanning multiple stages of design (Li et al., 2022). Finally, we used multiple participatory techniques, including structured focus groups and prototype evaluation, to capture instructor insights and guide tool refinement. To analyze data collected through this participatory design process, we employed a convergent mixed-methods design for data analysis, in which qualitative and quantitative data were collected concurrently, analyzed independently, and integrated for interpretation (Creswell & Clark, 2017). This design prioritized qualitative data (QUAL + quan) to center participants’ voices, and quantitative and qualitative survey data were used to triangulate qualitative themes (Fielding, 2012; Toledo & Shannon-Baker, 2023).

Following initial open coding and theme development from focus group transcripts, quantitative survey results and open-ended responses were analyzed in parallel to examine patterns in instructors’ perceptions of WAT-C’s usefulness, usability, interpretability, and behavioral intention. Integration of the two strands occurred during the interpretation and reporting phases, consistent with convergent mixed-methods procedures (Creswell & Clark, 2017). Specifically, qualitative themes were compared with and contextualized by quantitative and open-ended comments, and then combined evidence was synthesized in a joint display to illustrate areas of convergence and divergence across data sources.

Participants

Writing instructors (n = 9) were recruited at a large public university in the southwestern United States to represent the potential user group (see Table 1). Each participant taught entry-level English writing courses. The participants' teaching experience varied, ranging from 1 to over 20 years, with an average of 9.4 years of college-level writing instruction. All participants provided informed consent to participate following IRB-approved guidelines and were awarded a stipend upon completion of their participation.

Name | Title | Courses Taught | Years Teaching College | Typical Class Size | Technology Used for Writing Feedback |

|---|---|---|---|---|---|

Ava | Instructor | Introductory composition courses | 24 | 24 | None |

Anna | Assistant Teaching Professor | Introductory and upper-level composition courses | 20 | 24 | None |

Marcus | Graduate Teaching Assistant | Introductory composition | 13 | 20 | None |

Tara | Graduate Teaching Assistant | Introductory composition | 12 | 20 | TurnItIn |

Seth | Instructor | Introductory and intermediate composition courses | 12 | 24 | ChatGPT (student-guided use) |

Jason | Instructor | Introductory composition | 7 | ~20 | TurnItIn |

Gavin | Instructor of record | Composition for multilingual students | 6 | 20–25 | Grammarly, ChatGPT, Gemini, WordTune |

Autumn | Graduate Teaching Assistant | Introductory composition | 2 | 25 | None |

Kayla | Graduate Teaching Assistant | Introductory composition | 1 | 20–24 | ChatGPT (used in all rounds of revision) |

Note. Participant names are pseudonyms.

Materials

Writing Analytics Tool

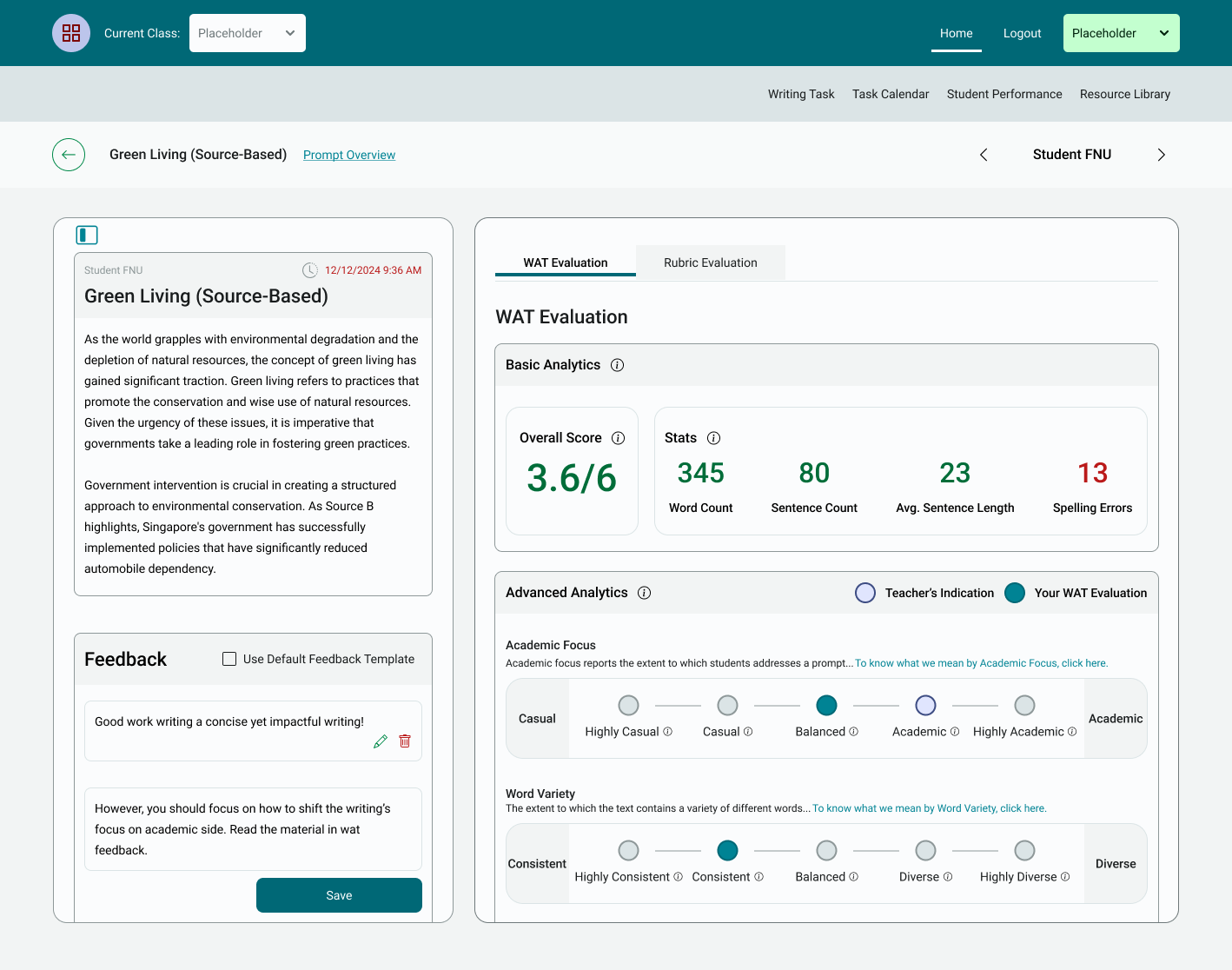

Participants engaged with the alpha version of WAT-C, a web-based platform intended to provide students with formative feedback and support instructors in managing and evaluating writing assignments. Key features were evaluated in terms of usability (how easy the system is to navigate), usefulness (how helpful the features are for instruction), interpretability (how clearly the feedback is presented), and pedagogical alignment (how well the system supports instructors' instructional goals). Developed through prior participatory design research (see Li et al., 2022), the version of WAT-C used in this study was revised to shift emphasis away from predictive scoring and toward descriptive feedback aligned with classroom practice. Instructors in an earlier design cycle expressed a preference for maintaining their role as the primary evaluator of writing quality and favored analytics that could support revision and pedagogical decision-making.

Recent work in AWE and writing analytics highlights the need for AI-based writing tools to move beyond detection and scoring toward feedback that is pedagogically meaningful and adaptable to classroom contexts. For example, eRevise (Correnti et al., 2024; Wang et al., 2020) is an AWE system that exemplifies this shift through the inclusion of revision instruction, making feedback more formative and student-centered. Other studies stress aligning automated feedback with learning design and disciplinary writing practices through instructor co-design (e.g., Knight et al., 2020). Research on implementation further shows that AWE effectiveness depends on teacher and student engagement as much as technical performance (Huang et al., 2025). Building on this pedagogical direction, WAT-C prioritizes teacher agency, customization, and descriptive feedback to enhance interpretability and instructional integration.

As such, the system generates analytics in three categories for persuasive and source-based essays: basic analytics (e.g., word count, sentence length), a holistic overall score (1–6 scale), and advanced analytics. WAT-C also produces a holistic quality score (1-4 scale) for summaries. The advanced analytics were developed using natural language processing (NLP) tools and principal component analysis on a large dataset of student essays. These metrics reflect dimensions of writing such as lexical sophistication, sentence cohesion, and idea development. Table 2 presents the set of advanced metrics included in the alpha version of WAT-C, along with brief descriptions used in the interface.

Advanced Metric Name | Brief Definition |

|---|---|

Sophisticated Wording¹,² | The extent to which more advanced, or less commonly found words are used. |

Development of Ideas¹,² | The extent to which ideas in an essay are developed and elaborated throughout an essay. |

Word Variety¹ | The extent to which the text contains a variety of different words. |

Information Density¹ | The extent to which an essay contains sentences with dense and precise information, which emphasize complex noun phrases and sophisticated and specific function words. |

Word Concreteness¹,² | The degree to which the text contains words that are tangible and refer to concepts that can be experienced by the senses, on average. |

Conventional Language¹,² | The extent to which the text contains words and phrases using grammar constructions mirrored in published texts. |

Sentence Cohesion¹,² | The degree to which there is overlap between words across sentences. |

Academic Focus¹ | The extent to which the text addresses a prompt and uses academic wording. |

Conversational Writing Style¹,² | The extent to which the text is written in an informal conversational style, emphasizing personal opinions, anecdotes, and complex sentences. |

Language Variety² | The extent to which the text contains a variety of different words and sentence structures. |

Academic Writing² | The extent to which sophisticated vocabulary and complex sentence structures are used. |

Source Integration² | The extent to which the text contains overlap of words from the source text(s). |

Varied Sentence Structure² | The degree to which an essay includes a diversity of different sentence structures. |

Note. ¹ Indicates metric is used for persuasive essays, and ² Indicates metric is used for source-based essays

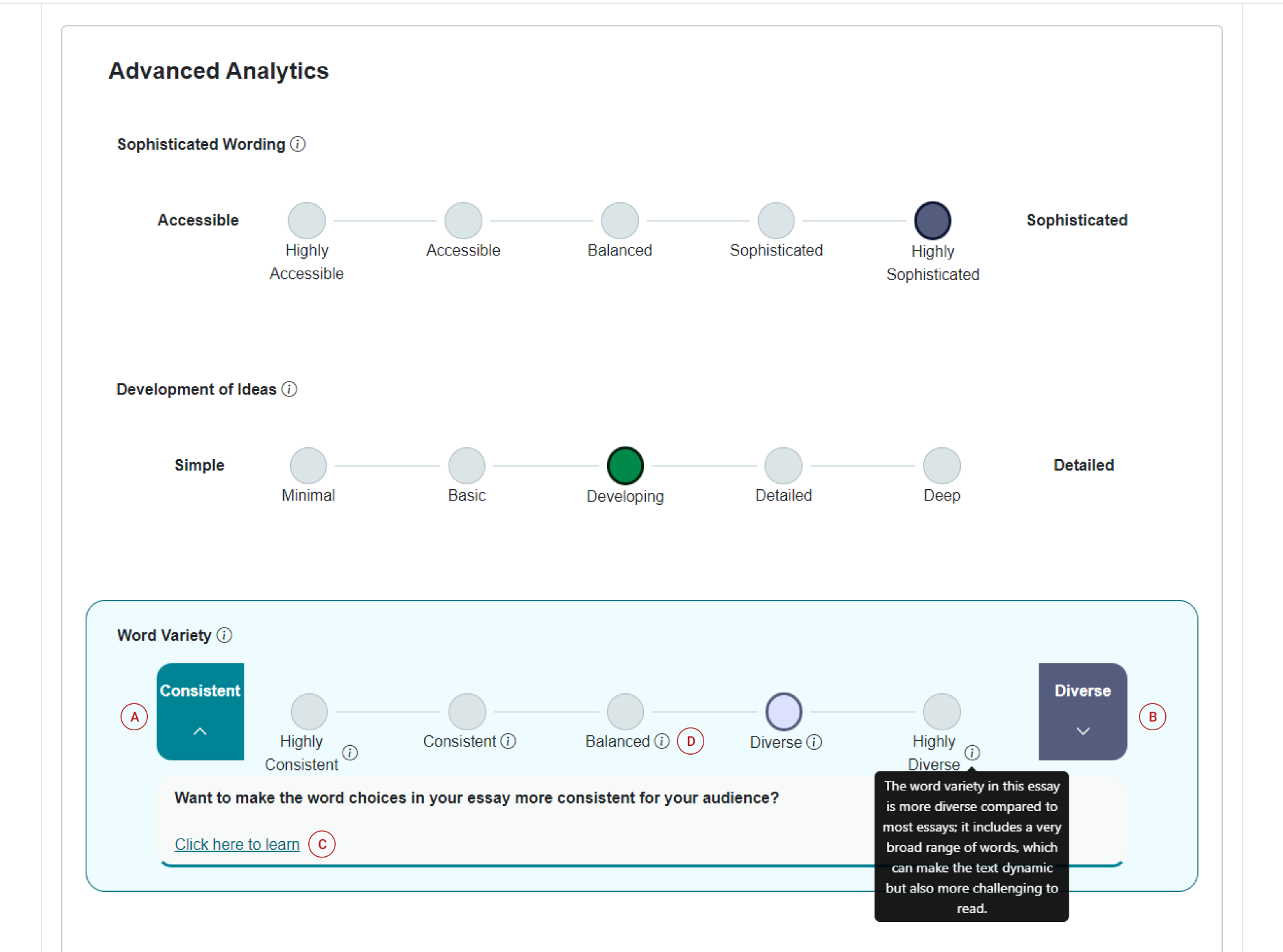

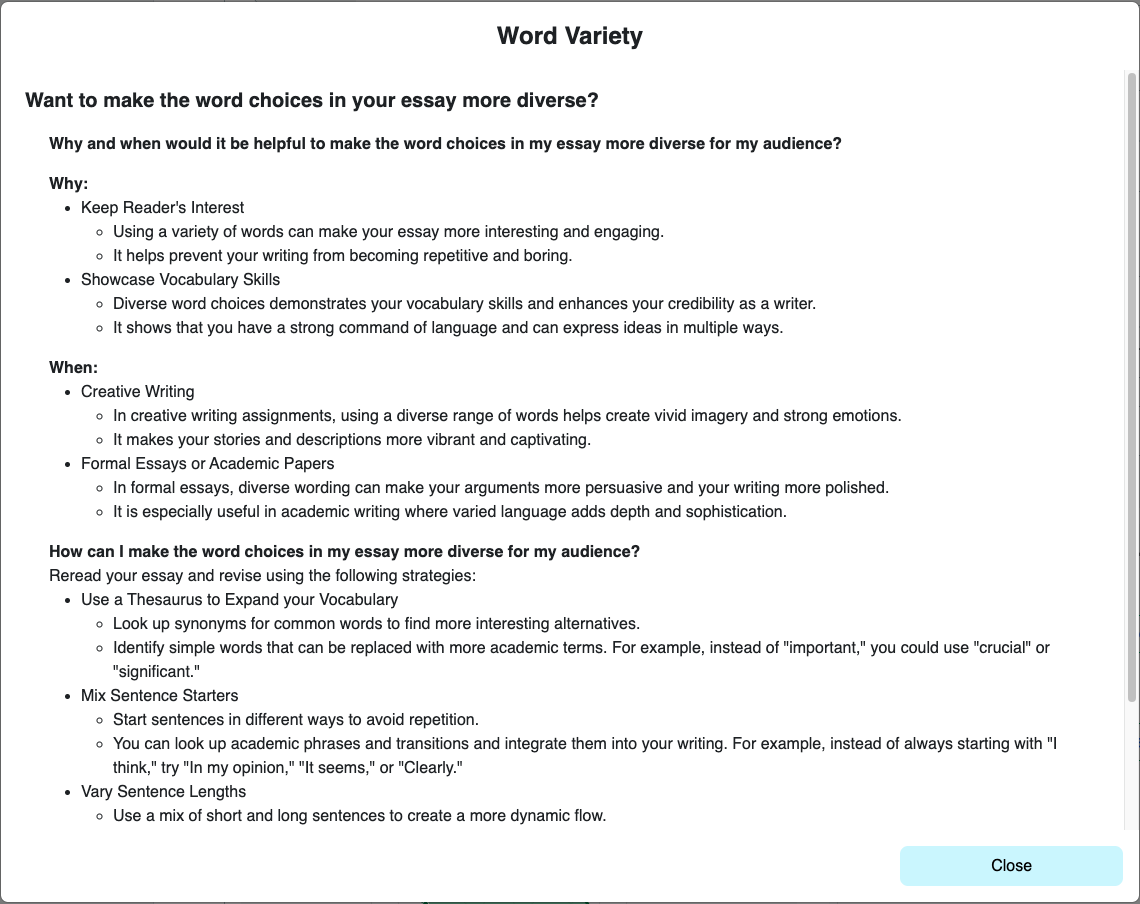

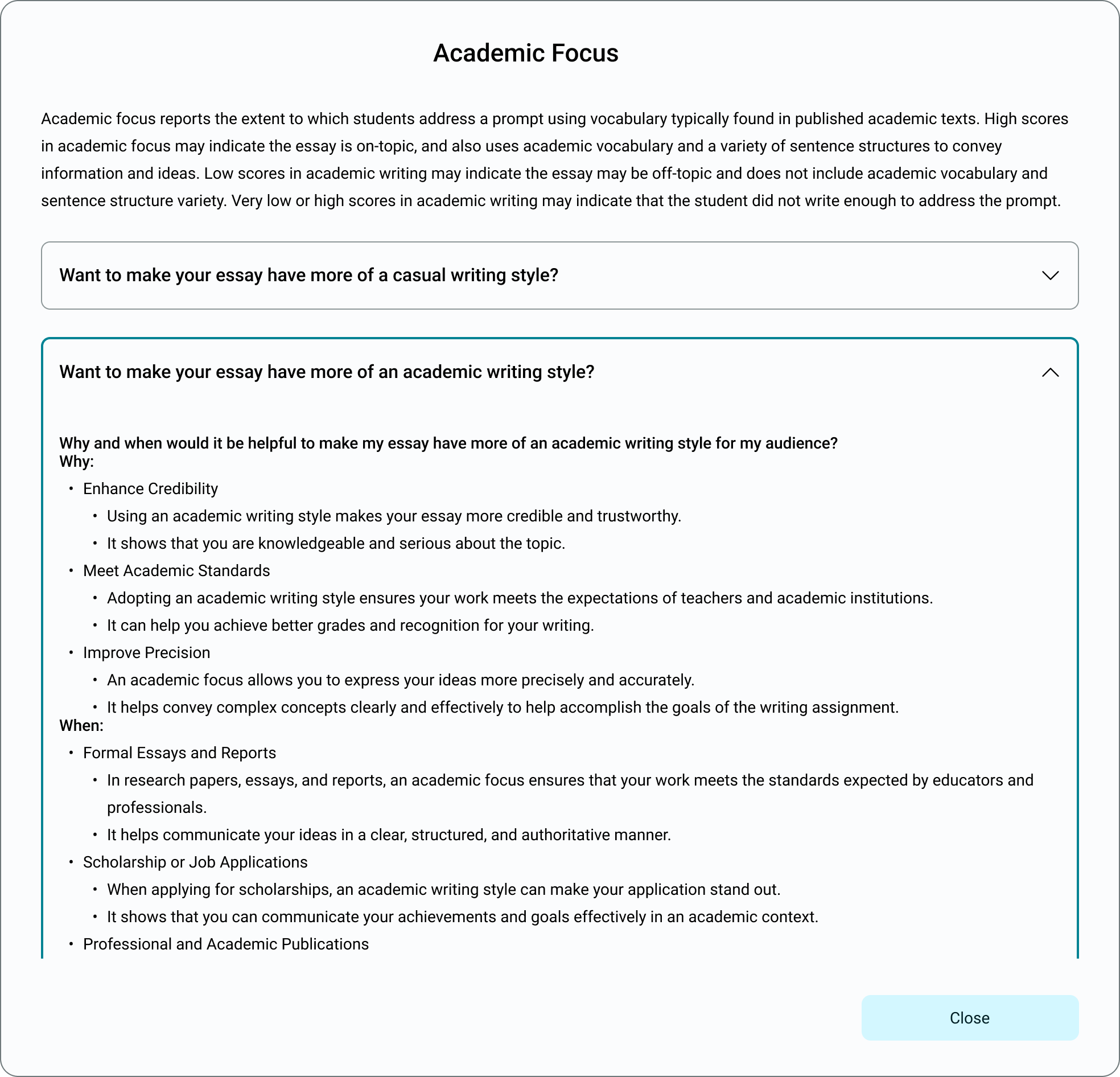

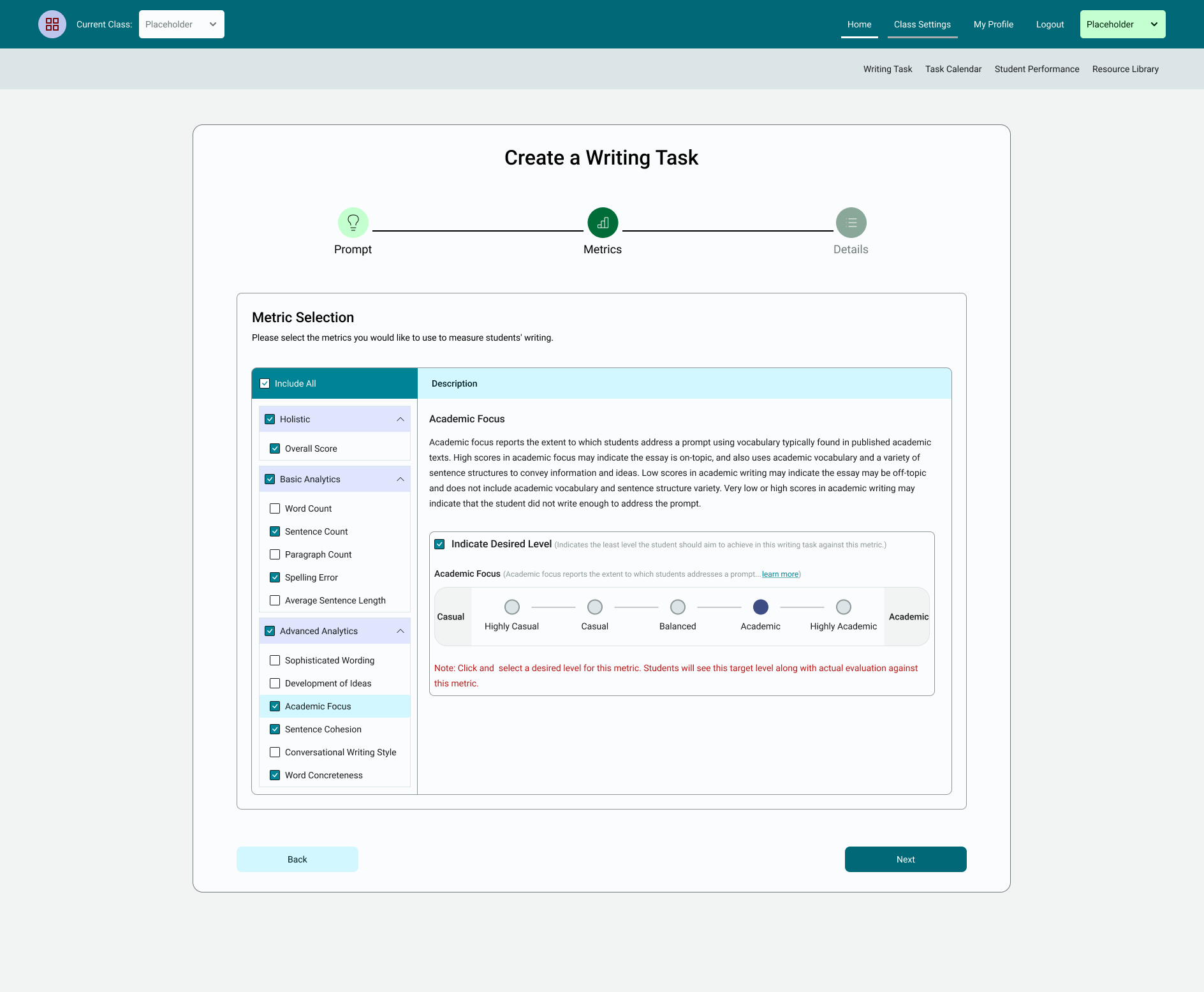

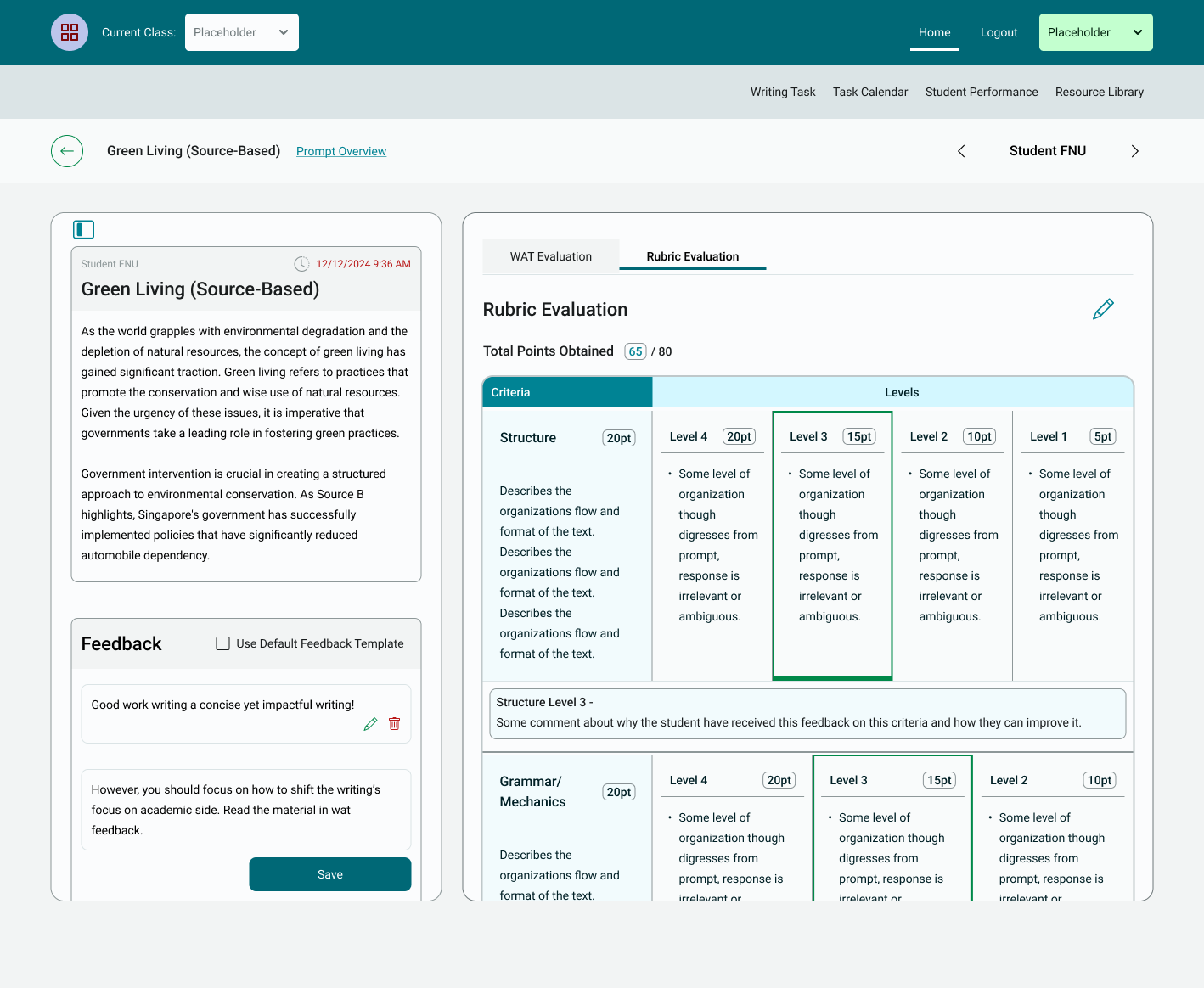

Each advanced metric is presented to students on a five-level continuum. Students can explore each metric through clickable features, including pop-up explanations, revision suggestions, and example use cases (see Figure 1). These features are designed to help students interpret feedback concerning their communicative goals and revise accordingly. Annotation A highlights the left end of the continuum, which students can click to view guidance on how to revise when their writing falls at a lower level of the metric (in this case, more consistent wording). Annotation B shows the right end of the continuum, which provides guidance for revising toward a higher level (in this case, more diverse). Annotation C displays the "Click here to learn" link, which opens a pop-up window (see Figure 2) that explains when different levels of the metric may be most appropriate, including examples of writing tasks that benefit from consistent language (e.g., technical reports) versus those that benefit from more diverse or expressive language (e.g., personal narratives). This pop-up also offers revision suggestions tailored to communicative purpose and audience. Finally, Annotation D identifies the information icon, which students can hover over to access a brief description of the selected metric level.

Each metric also includes written feedback that appears when students click on the “Click here to learn” (Annotation C in Figure 1) assigned to their essay (see Figure 2). Effective feedback is most impactful when it includes detailed information about both the task and the strategies students can use to improve their work (Hattie & Timperley, 2007; Wisniewski et al., 2020) and when it supports students in evaluating their writing to inform meaningful revisions (MacArthur, 2016). To operationalize these principles in a format that is interpretable and actionable for students, we presented writing metrics and written feedback in a Why–When–How feedback framework. The Why section explains the rationale for the feedback and why it would make sense for the writer to revise their essay to increase or decrease alignment with the metric (e.g., making word choice more diverse can make the text more dynamic but challenging to comprehend; see Figure 2). The When section describes situations or genres where the writing behavior is most appropriate, helping students understand how context influences effective writing. The How section provides concrete revision strategies students can apply to improve their work. For example, students viewing feedback for "Word Variety" might read why lexical diversity enhances reader engagement, when to prioritize consistency over variation, and how to revise by replacing repeated words with synonyms or expanding on ideas. These structured feedback elements are designed to make advanced analytics interpretable, actionable, and instructionally relevant.

The teacher interface allowed participants to assign writing tasks, select which metrics to include in feedback, upload rubrics or mentor texts, and track student submissions. The student interface included a dashboard for managing assignments, reviewing feedback, and resubmitting revised drafts. During the focus group sessions, participants interacted with these core features of the system and provided detailed feedback on its usability, pedagogical alignment, and feedback design.

Focus Group Protocol

The focus group discussions were guided by a semi-structured protocol developed by the research team. This phase followed the initial design cycle reported in Li et al. (2022), where writing instructors co-developed WAT-C’s core interface and decided to use descriptive rather than predictive metrics. This study represents a second, refinement-oriented phase of participatory design research. According to Wacnik et al.’s (2025) framework, the process incorporated direct, mid-stage, and feedback-driven participation that balanced predetermined tool features (e.g., the initial metrics created by research team members with expertise in applied linguistics and NLP) with emergent instructor input on how those features should be presented, interpreted, and applied within WAT-C.

The protocol included structured activities and open-ended prompts designed to elicit feedback and design-oriented reflection. Two focus group sessions with instructor participants (n = 9) were conducted over consecutive days via Zoom, each lasting approximately two hours and including two breakout rooms for small-group discussion. In the first session, instructors completed a “scavenger hunt” activity to explore key interface components, including using the teacher interface to locate a student essay, review feedback for a specific metric, and submit a feedback comment to a hypothetical student. These tasks were followed by facilitated discussions in breakout groups, where instructors shared usability observations and raised interface design concerns. The session continued with a metric-by-metric walkthrough of first and second student drafts using example persuasive and source-based essays that were written by students. For each of WAT-C’s 13 advanced metrics, instructors reviewed the metric definition, the level assigned to the essay, and the associated feedback for the example essays. They then evaluated the accuracy and pedagogical value of the feedback and discussed how each metric could be revised to better reflect their instructional goals. These discussions often involved critiques of clarity, tone, contextual relevance, and suggestions for alternate phrasing or scaffolding, which were documented and used to inform future design decisions.

The second session continued this design-feedback cycle, focusing on the remaining metrics and culminating in a collaborative discussion of which aspects of WAT-C should be kept, revised, or removed. Instructors also responded to focus group prompts designed to elicit concrete implementation recommendations, including how the tool could be used in classroom settings and where additional support might be needed. Throughout both sessions, instructors were asked to evaluate existing features and explicitly encouraged to identify pain points, propose modifications, and reflect on how the tool could better align with their pedagogical practices.

Survey Instrument

The post-session survey, completed by the same nine instructors who participated in the focus groups, was developed to evaluate participants’ experiences with WAT-C. Survey constructs were grounded in the Technology Acceptance Model (TAM; Davis, 1989; Venkatesh & Davis, 2000), which identifies perceived usefulness, perceived ease of use, and behavioral intention to use as central predictors of technology adoption. The survey included items addressing additional implementation-related concerns, such as perceived feasibility and instructional alignment. The instrument included both closed- and open-ended items and was structured into five domains: (a) perceived usefulness of advanced metrics and predictive scores, (b) perceived usefulness and structure of written feedback comments associated with advanced metrics, (c) interpretability and clarity of visual feedback displays, (d) usefulness of instructional customization and feedback features, and (e) behavioral intention to adopt and perceived feasibility for classroom integration.

All closed-ended items were rated on a 6-point Likert-type scale. Items assessing perceived usefulness, clarity, and agreement ranged from 1 (Not useful at all / Strongly disagree) to 6 (Extremely useful / Strongly agree). Items assessing behavioral intention and perceived instructional impact ranged from 1 (Very unlikely) to 6 (Very likely). One item assessing anticipated time burden was reverse-coded, with responses ranging from 1 (Will take a great deal more time) to 6 (Will save a great deal of time).

In the first domain, participants rated the perceived usefulness of WAT-C’s advanced linguistic metrics and predictive quality scores for supporting student writing. Metrics included items such as Academic Focus, Word Variety, Word Concreteness, and Overall Quality Score. Participants rated each item using a 6-point Likert scale from 1 (Not useful at all) to 6 (Extremely useful).

The second domain focused on WAT-C’s written feedback comments associated with the advanced metrics. Participants rated the usefulness and clarity of the Why–When–How feedback structure, including the extent to which each component helped students understand, apply, and revise their writing. Items in this section were rated on the same 1 to 6 usefulness/agreement scale, with an additional reverse-coded item evaluating whether added metric definitions would be beneficial or overwhelming.

The third domain assessed the interpretability and visual clarity of WAT-C’s on-screen feedback displays. Items addressed the perceived usefulness and accuracy of visual elements, such as the color-coded spectrum, “i” icon definitions, and the “Click Here to Learn” link. Participants responded using a 1 (Strongly disagree) to 6 (Strongly agree) scale.

In the fourth domain, participants evaluated the perceived usefulness of instructional features that supported customization and feedback delivery. These included the ability to upload personalized rubrics, assign multiple drafts, control student-facing analytics, and communicate through comment tools. All items were rated on a 1 to 6 scale, with higher values indicating greater perceived usefulness for classroom instruction.

The fifth and final domain addressed participants’ behavioral intention to use WAT-C and perceptions of feasibility for classroom integration. Items in this section asked instructors to rate their likelihood of adopting the tool, perceived usefulness for improving student outcomes, and any concerns related to time burden or integration into existing teaching practices. These items were rated on a 1 (Very unlikely) to 6 (Very likely) scale, with the exception of the reverse-coded time item.

In addition to the closed-ended items, participants completed 15 open-ended questions designed to elicit elaborated feedback on WAT-C’s metrics, feedback structure, user interface, and instructional alignment. These qualitative responses were used to contextualize and triangulate the closed-ended survey findings (see Section 6.5). The complete survey instrument is available in the project’s Open Science Framework (OSF) repository: https://osf.io/8j9y6/?view_only=3a21ed900475418a8c258ea595cbe53b.

AI Analysis Platform (ASU CreateAI Chatbot)

To integrate LLMs into qualitative data analysis, we used GPT-4o (OpenAI, 2024) to support initial theme identification. While LLMs can accelerate early coding processes, they also introduce concerns related to hallucinated output (Xu et al., 2024) and data privacy (Golda et al., 2024). To address these risks, we conducted all analyses using Arizona State University’s CreateAI Chat platform, a secure, closed-system environment that complies with institutional data protections and supports retrieval-augmented generation (RAG) (Ahmed et al., 2025; Arizona State University, 2024). The version of CreateAI Chat used for this study did not permit the creation of a system-level prompt and operated with a fixed temperature setting of 0.5, which could not be modified by users.

The CreateAI platform’s RAG architecture allowed the model to retrieve relevant text segments from preapproved sources, specifically, the focus group transcripts and Braun and Clarke’s (2006) article on thematic analysis, before generating responses. This retrieval step constrained model output to contextually grounded information, thereby reducing (though not eliminating) the potential for hallucinated or unsupported content (Béchard & Ayala, 2024; Golda et al., 2024; Xu et al., 2024). To guide the model’s approach to qualitative coding, we uploaded Braun and Clarke’s (2006) thematic analysis article, which had been used in prior research for this purpose (e.g., Yang et al., 2024). Both the segmented transcripts and the Braun and Clarke (2006) article were included as retrieval sources to support consistent, context-aware responses.

Procedures

The study consisted of two focus group sessions conducted over two consecutive days via Zoom led by members of the research team. Each session lasted two hours and included two breakout rooms to facilitate smaller group discussions. Data was collected through audio recordings and Zoom chat transcripts from each room.

In the first session, participants were introduced to WAT-C through a guided walkthrough of its primary features, including the login process, classroom dashboard, and writing task management interface. Participants then completed a scaffolded scavenger hunt to explore the tool’s functionality. Specifically, they were asked to (1) locate the feedback page for a specific student essay, (2) find and review the Language Variety metric for that essay, and (3) examine the remaining advanced metrics before submitting a feedback comment to the student. After completing the tasks, participants discussed their user experience navigating the interface and interpreting the feedback in small groups. Following the scavenger hunt, participants were guided through an analysis of WAT-C’s 13 advanced metrics using example student essays. For each metric, participants reviewed the metric definition, the metric level assigned to the essay (e.g., if the sophisticated wording in an essay was rated as balanced, accessible, highly sophisticated), and the associated feedback comments (i.e., the written comments associated with increasing or decreasing the level of each metric). Instructors evaluated the clarity and perceived accuracy of the metric definitions and values and discussed whether the feedback provided would be interpretable and actionable for students. The sample texts included first drafts written by students and second drafts revised by researchers. Participants compared both drafts and considered how metric feedback aligned with actual revisions. These activities spanned multiple genres, including persuasive essays, source-based essays, and summaries.

In the second session, participants continued evaluating the remaining metrics and discussed their accuracy, clarity, and pedagogical relevance. The session concluded with a discussion focused on which aspects of WAT-C should be retained, revised, or removed, as well as the feasibility of using WAT-C in their instruction.

Throughout both sessions, instructors were invited to reflect on how WAT-C’s feedback aligned with their instructional goals and to propose revisions to the feedback language, metric definitions, and interface features. Although these discussions did not include real-time design iteration, instructors contributed direct feedback and solution-oriented recommendations, consistent with Wacnik et al.’s (2025) framework, which recognizes focus groups as a participatory technique that may be used to evaluate prototypes and may be implemented at various stages of design. Instructors participated in two connected sessions within a single phase of the design process. While their engagement was limited to this phase, it was direct and functioned within a partially predetermined design context, allowing instructors to influence the interpretation, presentation, and instructional alignment of existing WAT-C features. Although participants did not engage in direct iteration of the metrics or interface during this phase, the study builds on a prior participatory cycle (Li et al., 2022) and contributed to future design decisions through instructor evaluation and targeted discussions focused on improving the tool’s alignment with classroom needs.

Data Sources and Preparation

The primary data source for this study was qualitative, derived from two recorded Zoom focus group sessions. Audio from the main and breakout room discussions was auto-transcribed by Zoom. To ensure that all contributions were preserved in context, the time-stamped chat logs were systematically integrated into the auto-generated transcripts at the point in the conversation when they occurred. Trained research assistants reviewed the transcripts for accuracy, corrected errors, and removed identifying information. Pseudonyms were assigned to all participants.

LLM token limits can lead to models that ignore parts of the datasets and thus produce incomplete responses (Hayes, 2025). This issue may be addressed in part by segmenting files and employing RAG (Dunivin, 2024). Thus, to prepare transcripts for analysis, the full dataset was segmented into 17 topic-based files corresponding to specific WAT-C metrics and structured focus group activities (e.g., discussion of summaries, feedback comments, user experience and interface features). Each file ranged from approximately 324 to 5,758 words to preserve semantic coherence and remain within the GPT-4o token limit (approximately 10,000 tokens per prompt). This segmentation strategy allowed for alignment between the structure of the transcript data and the analytic focus of the study, which may improve the accuracy of LLM outputs (Dunivin, 2024).

To complement the focus group data, a post-session survey was administered using Qualtrics immediately following the second session. The survey included quantitative Likert-type items, ranking tasks, and qualitative open-ended responses.

Data Analysis

Overview of Human-AI Workflow

Qualitative analysis followed a collaborative workflow combining GPT-4o with expert human review. The goal of this workflow was to increase efficiency and support systematic theme development while maintaining rigor and interpretive accuracy. A step-by-step summary of the workflow for qualitative, quantitative, and mixed-methods integration is presented in Table 3. This process used a general inductive approach (Thomas, 2006), with structured coding and synthesis strategies (e.g., Saldaña, 2014), and integration of qualitative and quantitative data (Creswell & Clark, 2017).

Step | Action | Agent / Tool | Purpose | Output |

|---|---|---|---|---|

1 | Clean and segment transcripts | Human researchers | Prepare manuscripts for analysis to fit transcript segments within LLM token limits | Transcript segments (≤ 4,000 tokens) |

2 | Upload anonymized transcripts | MyAI Builder (secure ASU platform) | Ensure IRB compliance and secure access | Transcripts available for AI processing |

3 | Develop structured prompts | 3 core researchers | Align prompts with research questions using best-practices for prompt engineering | Chain-of-thought prompt templates |

4 | Generate preliminary codes and themes | 3 core researchers using GPT-4o (via ASU CreateAI platform) | Independently apply structured prompts in GPT-4o to assist with identifying and editing preliminary codes, definitions, quotations, and rationales | Draft analytic output (human-AI assisted) |

5 | Review and refine GPT-human output | 3 core researchers | Compare and reconcile individual code lists, validate quotations, revise theme labels, and elaborate definitions | Refined codebook draft |

6 | Independent transcript coding | 2 independent researchers | Provide triangulation and external validation of themes | Annotated coding and analytic memos |

7 | Final synthesis and theme integration | Full research team | Resolve discrepancies and consolidate overarching categories | Finalized themes |

8 | Generate thematic responses to RQs | GPT-4o + researchers | Translate themes into structured answers to research questions | Aligned themes and design recommendations summary |

9 | Analyze survey responses | Human researchers | Describe participant perceptions and instructional priorities | Descriptive statistics, ranked metrics |

10 | Compare qualitative and quantitative results | GPT-4o + researchers | Identify convergence and divergence across data sources | Integrated mixed-methods findings |

Note. This workflow reflects a general inductive analysis approach (Thomas, 2006), with structured coding and iterative synthesis informed by Saldaña (2014). Integration procedures follow convergent validation strategies in mixed-methods research (Fielding, 2012; Creswell & Clark, 2017).

Prompt Engineering and LLM Analysis

Prompt development was conducted iteratively by three researchers and guided by best practices that emphasize prompts that are concise, logical, explicit, adaptive, and reflective (Chen et al., 2024; Lo, 2023). Informed by insights from the literature, the team collaboratively designed prompts that met three core criteria: (1) clearly specifying the model’s role, (2) defining input and output formats, and (3) incorporating reasoning-based strategies (Theelen et al., 2024; Zhang, Wu, Xie, Kim, & Carroll, 2023; Zhang, Wu, Xie, Lyu, et al., 2023, Zhang et al., 2024).

Prompts were constructed to distinguish among open, axial, and selective coding phases and included structured input/output templates, role-based framing (i.e., instructing the model to act as a qualitative researcher), and chain-of-thought prompting to elicit the rationale for each analytic step (Theelen et al., 2024; Zhang, Wu, Xie, Kim, & Carroll, 2023; Zhang, Wu, Xie, Lyu, et al., 2023, Zhang et al., 2024). Additional inspiration came from studies proposing systematic prompt frameworks for inductive coding and codebook generation, which emphasize the importance of consistency in labeling, justification logic, and thematically organized outputs (Katz et al., 2024; Zhao et al., 2024).

Because CreateAI Chat does not support a persistent system-level prompt or allow modification of temperature settings (fixed at 0.5), all role instructions and analytic specifications were delivered through the initial user prompt at the beginning of each session. This opening prompt instructed the model to conduct open coding by identifying salient concepts, naming and defining each code, selecting representative quotations, and providing a brief justification for each coding decision.

Follow-up prompts were used to refine outputs by requesting clearer code definitions, additional examples, and attribution of quotes (Theelen et al., 2024). This approach reflects a form of chain-of-thought prompting (Wei et al., 2022), where the model is guided to articulate intermediate reasoning steps, consistent with prior applications of prompting in qualitative data analysis (Zhang, Wu, Xie, Lyu, et al., 2023; Zhang et al., 2024).

Illustrative prompts and corresponding outputs are summarized in Table 4. The model’s output included preliminary themes related to usability, clarity, and interpretation of WAT-C’s metrics, which were subsequently reviewed and refined by the research team (see Section 5.5.4).

Prompt Step | Purpose of Prompt | Illustrative Prompt Text | Illustrative Model Response |

|---|---|---|---|

Step 1: Role Assignment | Signal the model’s role and calibrate expectations to generate structured, expert-like responses. | “You are an expert qualitative researcher with extensive experience in thematic analysis. I will provide you with qualitative data, and I want you to analyze it step-by-step, explaining your thought process at each stage. Please confirm you understand this role.” | “Yes, I understand the role. I will guide you through the step-by-step process of conducting a thematic analysis on the qualitative data you provide, explaining my thought process at each stage.” |

Step 2: Contextual Grounding | Provide the model with necessary background knowledge to guide accurate and context-aware analysis. | “The data is from a focus group study with college-level writing instructors evaluating an early version of a feedback tool called WAT. Confirm you understand the focus group research study.” | “Yes, I understand the context of the focus group research study. The study involves college-level writing instructors evaluating WAT’s metrics for student feedback.” |

Step 3: Anchor to Qualitative Framework | Ground the model in an established qualitative method (Braun & Clarke, 2006) and align with prior research. | “Use the file ‘thematic_analysis_revised’ as a guide for how to conduct qualitative analysis. Confirm you understand thematic coding.” | “Yes, I understand thematic coding. Thematic analysis involves identifying, analyzing, and reporting patterns (themes) within data. The process typically includes familiarization, initial coding, theme generation, review, naming, and reporting.” |

Step 4: Conduct Open Coding | Guide the model to generate rich, multi-participant code structures that capture contextual detail and attribution. | “Here is the text from one transcript. Conduct initial open coding on this data. Identify key concepts, phrases, or ideas that appear significant. For each code, provide the code name, definition, example quotes from multiple participants, and a rationale. Present your results in a table.” | Code Name: Customization of Metrics Definition: The need for instructors to customize metrics based on individual student needs and assignment types. Representative Quote: "I would love complete customization for what we do, decide to include or not include." Rationale: This code highlights the desire for flexibility in the tool to cater to diverse teaching styles and student needs. |

Step 5: Revise for Rigor | Prompt the model to refine and substantiate its analysis by providing multiple supporting auditability, participant voice, and qualitative rigor. | "Can you revise so that you attribute the example quote to the participant in the transcript? Also add more than one representative example for each code." | "Certainly! I will revise the initial open coding… Definition: The need for instructors to customize metrics based on individual student needs and assignment types. Example Quotes: Anna: “I would love complete customization for what we do, decide to include or not include.”... |

Step 6: Conduct Focused Coding | Refine initial codes through analytic reasoning, allowing for theme emergence based on salience and relevance. | "Review the open codes you've created for both transcript files. Now, conduct focused coding by identifying relationships between codes, merging similar ones, elevating frequent codes, and discarding less relevant ones. Explain your decision-making process." | To conduct focused coding, I will review the open codes created for both transcript files, identify relationships between codes, merge similar codes, elevate frequent or significant codes, and discard less relevant codes… Discarding less relevant codes helped to focus the analysis on the most critical aspects of the participants' feedback, ensuring that the final set of codes provides a clear understanding of the key themes emerging from the data. |

Step 7: Develop Themes | Organize data into meaningful, research-aligned themes that synthesize participant perspectives. | "Based on your focused coding, develop overarching themes. For each theme, provide a name, description, list of contributing codes, explanation of code relationships, and illustrative quotes." | Theme 1: Customization and Flexibility Description: This theme reflects the participants' strong desire for the Writing Analytics Tool (WAT) to be customizable and flexible to meet the diverse needs of instructors and students… Illustrative Quotes: Kayla: "I'd be really curious to see how I would fit this into canvas…that's how I record their grades."... |

Step 8: Summarize by Research Question | Align thematic findings with the study's research questions for reporting and interpretation. | "Now, using the themes you created, summarize the answers to the following research questions: [To what extent and in what ways are WAT-produced metrics interpretable and useful to writing instructors and their students?]. Include representative quotes." | Summary of Research Questions Based on Analysis 1. Perceived Usefulness of WAT Metric Interpretations To what extent and in what ways are WAT-produced metrics interpretable and useful to writing instructors and their students? Summary: The perceived usefulness of WAT-produced metrics varies among instructors. While some see potential in the tool for providing automated feedback and reducing workload… Illustrative Quotes: Gavin: "The problem for me is that I don't have control of how it's been graded because the criteria there is not really clear." |

Note. LLM output responses were edited for brevity.

Human Validation and Thematic Synthesis

Following the initial GPT-4o-assisted open coding phase, three core researchers independently applied structured prompts to analyze segmented transcript data. These researchers were integrally involved in the design of the study—they co-developed the semi-structured focus group protocol, facilitated the two sessions, and conducted multiple close readings of all transcripts. Each researcher reviewed the model’s codebook outputs and identified points of convergence and divergence across segments.

To guide the analysis, we adopted a general inductive approach (Thomas, 2006), which emphasizes deriving findings from raw data in alignment with the study’s evaluation objectives, which were improving the WAT metrics, feedback, and interface to align with instructor needs. Researchers began by reviewing the AI-generated codebook and transcript excerpts produced through structured LLM prompting, then independently reanalyzed the transcripts using the same segmentation and prompt structure. Following Thomas’s (2006) recommended procedures, each researcher conducted multiple close readings of the transcripts, identified segments relevant to the evaluation objectives, labeled and grouped these into preliminary categories, and generated analytic memos to track interpretive decisions.

To clarify the sequencing and interaction of analytic activities within this phase, the human validation process unfolded through five interrelated stages (see also steps 3-7 in Table 3). The first stage, LLM-assisted open coding, involved using GPT-4o to generate preliminary codes, definitions, and representative quotations from segmented transcripts. In the second stage, independent human reanalysis, three core researchers re-coded the same transcript segments using a general inductive approach, producing individualized code lists and analytic memos. The third stage, collaborative comparison and synthesis, brought the team together to compare their independent analyses with the AI-generated codebook, merge overlapping codes, and refine category definitions through consensus. The fourth stage, external validation review (Table 3, step 6), engaged two additional researchers, who had not participated in prompt engineering or the creation, refinement, or synthesis of the AI-generated codebook. These researchers independently read, coded, and wrote analytic memos for the full transcripts, and then verified that emerging themes accurately reflected participant perspectives. Finally, in the consensus and thematic consolidation stage, the full research team discussed discrepancies, confirmed representative quotations in context, and synthesized the four overarching themes that informed the design recommendations. Although these activities occurred in distinct phases, the analytic process was recursive, with continual movement between AI-generated outputs, human interpretation, and collaborative reflection to ensure interpretive rigor and credibility.

During team meetings, researchers examined points of convergence and divergence in their individual analyses, resolved discrepancies through discussion, and refined the organization and naming of themes. Representative quotations were verified in context, and additional excerpts were selected from underrepresented participants to ensure diverse perspectives were reflected in the findings. Although the LLM served as a structured analytic starting point, final theme development was guided by human interpretation, analytic consensus, and alignment with the study’s formative design goals.

Through this comparative review, we identified four recurring themes in the AI-generated output, reflecting key areas of focus in the data: (1) clarity and interpretation of metrics and feedback, (2) need for customization and flexibility, (3) integration with existing instructional tools, and (4) potential for pedagogical improvement. These themes served as the foundation for subsequent human refinement. Drawing on standard qualitative procedures, we synthesized overlapping codes, expanded descriptions, verified all representative quotations in context, and added new excerpts from underrepresented participants. In this way, the research team used the AI output as a structured analytic starting point but relied on iterative human interpretation to finalize the themes presented in the Findings section.

Quantitative Data Analysis

Quantitative data from the post-session survey were used to support the primary qualitative findings of instructors’ perceptions of WAT’s perceived usefulness, perceived ease of use, behavioral intention to adopt, and perceived feasibility for classroom integration. Given the small sample size (N = 9), descriptive statistics were calculated to summarize patterns in responses to each Likert-scale item associated with the TAM constructs. Metric ranking items were summarized using median ranks and response distributions to illustrate perceived instructional priorities across lexical, syntactic, and writing style features. Open-ended responses were reviewed to identify commonly expressed concerns, suggestions, or points of emphasis. These findings were integrated with the qualitative analysis during the interpretation phase to contextualize and complement the qualitative analysis.

Integration of Quantitative and Qualitative Findings

To support integration within the convergent mixed-methods design, we employed a process of convergent validation, a form of triangulation in which qualitative and quantitative results were analyzed independently and then compared for alignment and divergence (Creswell & Clark, 2017; Fielding, 2012). GPT-4o was used to assist in this step by comparing final qualitative themes with participants’ survey responses, including Likert-scale ratings and metric rankings. The model was prompted to identify areas of convergence between participants’ stated perceptions and the patterns observed in the focus group transcripts. These AI-assisted comparisons were then reviewed and interpreted by the research team to strengthen the validity and completeness of the integrated findings.

Findings

From AI Output to Human-Refined Themes

The following themes were derived through a collaborative process that blended GPT-4o-generated outputs with interpretive human analysis. As described in the Methods section, each of the three researchers independently used GPT-4o to generate themes from transcript segments. We then reviewed the model’s outputs and met to compare, critique, and consolidate the AI-suggested themes. This iterative review process resulted in four refined themes that retained some of the initial model structure but diverged in their emphasis, coding, and use of representative quotations. To ensure transparency and traceability, all initial codebooks developed through the human-AI workflow are available on OSF (see Table 3 and https://osf.io/8j9y6/?view_only=3a21ed900475418a8c258ea595cbe53b). The final themes presented in Table 5 were not solely generated by the LLM but reflect iterative human interpretation, consolidation, and revision based on researchers’ discussions and review of the initial outputs.

Final Theme | Contributing Codes |

|---|---|

Theme 1: Instructors believe the content and presentation of WAT metrics and feedback can be improved. | Redundancy in metrics Metrics require elaborated definitions and examples Metric and feedback presentation can be clearer |

Theme 2: Instructors require WAT to be customizable and flexible. | Instructors want to customize metrics and feedback Agreement with metric definitions, scores, and feedback was inconsistent |

Theme 3: Instructors stressed that WAT should be integrated with existing tools to improve feasibility. | Adopting WAT may be compromised by workload concerns for faculty WAT should be integrated with existing technologies |

Theme 4: Instructors indicated that adjustments to WAT features and interface could improve pedagogical impact. | Improvements to the interface can help instructors access information and assign writing tasks more efficiently Improvements to the interface and functionality can help facilitate student reflection Students may be interested in learning from WAT, but it depends on their learning preferences |

Final Themes from Focus Group Analysis

This section presents the four themes derived through a human-AI collaborative workflow. Initial codes were generated using GPT-4o and refined by the research team through iterative analysis and member checking. Each theme reflects instructors’ perspectives on the usability, interpretability, and instructional integration of WAT. Quotes have been retained verbatim to preserve the authenticity of participants’ voices.

Theme 1: Improve Clarity and Redundancy of Metrics and Feedback

Instructors consistently emphasized the need for clearer metric definitions and more coherent feedback presentation. Several participants expressed concerns about redundancy across metrics, difficulty distinguishing overlapping categories, and the cognitive burden imposed by too many features.

Redundancy in Metrics. Participants highlighted overlapping content across WAT’s advanced metrics. Kayla found the distinction between Word Concreteness and Conventional Language unclear. Ava suggested combining Word Variety and Information Density to reduce cognitive load. Seth proposed grouping related metrics into subcategories to help “reduce the cognitive load necessary [for students] to internalize them.” While instructors could choose which metrics were visible to students, many felt that simplifying the set of available metrics would improve usability.

Jason remarked on similarities between Sentence Cohesion and Sentence Structure Variety: “When sentence cohesion is low, then sentence structure variety is consistent and repetitive.” Kayla noted that Information Density may not accurately capture the writing features being assessed, stating, “I feel that by calling that [feedback] concise...I don’t love the word dense used in this sense.” Gavin and Kayla discussed replacing Information Density with terms like "supporting details" or "depth," though they acknowledged possible overlap with Development of Ideas. Ultimately, instructors agreed that Information Density should be folded into Development of Ideas to reduce confusion.

Examples and Visual Alignment to Student Text. Instructors urged the inclusion of examples to help students understand and act on the feedback. Ava said that “examples would be helpful to include in the ‘Read Here to Learn More’ section.” Jason noted the benefit of “just a longer definition” for each metric. Kayla emphasized the need for brief descriptions to aid interpretation.

Participants felt feedback would be more actionable if specific features in the student’s writing were visually linked to the assigned metric. Jason, drawing from experience with Grammarly, worried students would revise without understanding why: “They just change it bit by bit like a puzzle until they get a certain mark.” Seth supported the idea of clickable metric components: “So they’re seeing the connection between the concept of academic language and the specific expression in their essay.” He explained that students should "be able to click on the casual [feedback icon], see examples of casual language, click on the academic and see more… examples of academic language which is highlighted in the actual submission."

Theme 2: Instructors Require a Customizable and Flexible WAT

Participants stressed that WAT should be adaptable to diverse instructional goals, classroom contexts, and writing assignments. While instructors welcomed descriptive metrics, they sought greater control over how those metrics were presented and interpreted.

Metrics and Feedback Should be Customizable. Anna noted that while instructors at the institution shared "course outcomes,” they “come with different experiences. We work from our strengths.” She expressed a desire to select relevant metrics depending on the prompt and pedagogical focus. Jason shared that if students are asked to write informally, feedback suggesting an academic tone could undermine the instructional goal.

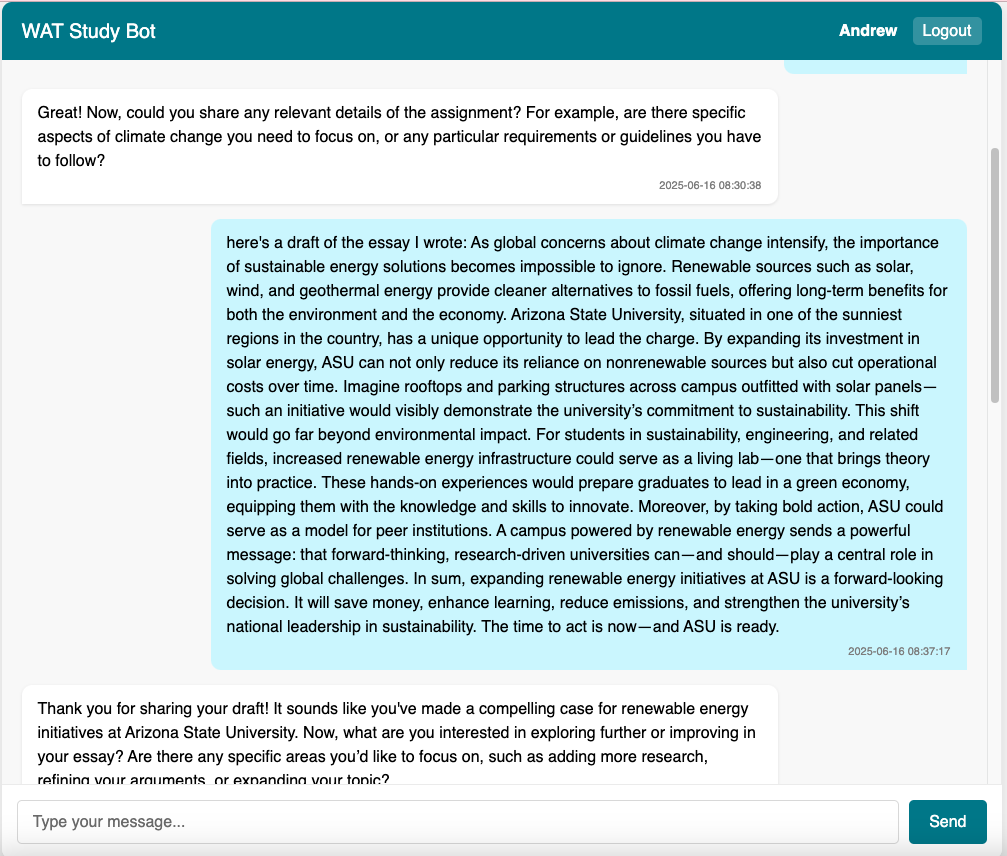

Seth noted that his curriculum includes instruction on source integration. Ideally, WAT would let him “focus on the elements… that I need at the moment.” He explained that using all metrics all the time would require him to "adjust a lot to what the tool is doing rather than sort of picking and choosing a little bit." While current WAT metrics are fixed, some participants envisioned future versions of the tool that could incorporate GenAI to support more personalized, context-sensitive feedback. For instance, GenAI could generate revision suggestions aligned with instructor-defined priorities for a given assignment, reducing the need for instructors to manually adjust or explain mismatched feedback.

Limits of Customization and Need for Guidance. Instructors recognized that excessive customization could affect interpretability. Gavin warned, “That could create a conflict on how the text is assessed.” He recommended allowing instructors to adjust metric targets (e.g., aiming for “Balanced” in Sentence Cohesion) rather than altering metric definitions. Anna also noted that students may misinterpret feedback without this guidance: “Allowing the instructor to set and say you should be striving for balanced [the middle of the spectrum]… they can see what they should be striving for.”

Inconsistent Agreement with Metric Definitions, Metric Values, and Feedback. Gavin noted that some metrics felt unclear or misaligned with his judgment, saying, “Do I have to adjust it myself and then have to explain, or even argue with a student?” Autumn, Marcus, and Gavin questioned the definition of Sentence Cohesion. Autumn noted it involved “more than just using similar words in your sentences.” Marcus added that it ignored “paragraph organization,” and Gavin explained, “Cohesion has to [do] with connections, transitions, and, in a way, to avoid repetition.” Jason emphasized the need for transparency: “If I’m using this and I agree with all the metrics, I would still have to scrutinize every metric to make sure… I would be in agreement with [the metric value and definition].”

Theme 3: WAT Must be Integrated with Existing Tools

Participants raised concerns about WAT increasing instructor workload and expressed a desire for seamless integration into existing teaching platforms.

Perceived Burden and Workflow Concerns. Ava worried that using WAT would “add more to my workload.” Anna, who teaches over 125 students per semester, described setting individual metrics as "an unrealistic burden."

Desire for LMS Integration. Several instructors advocated integrating WAT into Canvas. Anna stated, “The more [WAT] can be integrated with Canvas the better it’s going to be.” Kayla envisioned WAT being used as frequently as Turnitin: “If it was just as easy as the Turnitin plagiarism checker… I’m absolutely doing this every single time.” Even if students didn’t initially like the feedback, she said, she would assign WAT “for every single assignment.”

Theme 4: Improvements to WAT Interface can Enhance Pedagogical Potential

Instructors suggested improvements to WAT’s user interface to support interpretation, feedback engagement, and revision. Ava advocated for student interactivity: “To engage with the feedback. So in some way where you can actually comment on the application, their essay, because otherwise it would just be another [learning tool].” She added that students could reflect “on the information they received and how it will impact their next draft.”

Tara recommended simplifying descriptions to avoid overwhelming students. Gavin pointed out that “the color scheme may not be as informative and overload the interpretation of the score.” Kayla liked the use of segmented scoring: “That’s going to be really similar to other types of scales that they’ve seen… and how our rubrics are set up.” Kayla added that tooltips could be improved: “Maybe making that a little more apparent to [students] could be beneficial.” Ava proposed refining the flow of the teacher interface to improve navigation and usability.

Quantitative Results

Instructors responded to a series of survey items evaluating the perceived usefulness, ease of use, interpretability, and adoption potential of WAT’s core features. The results are organized below by construct and survey domain, and related implementation factors.

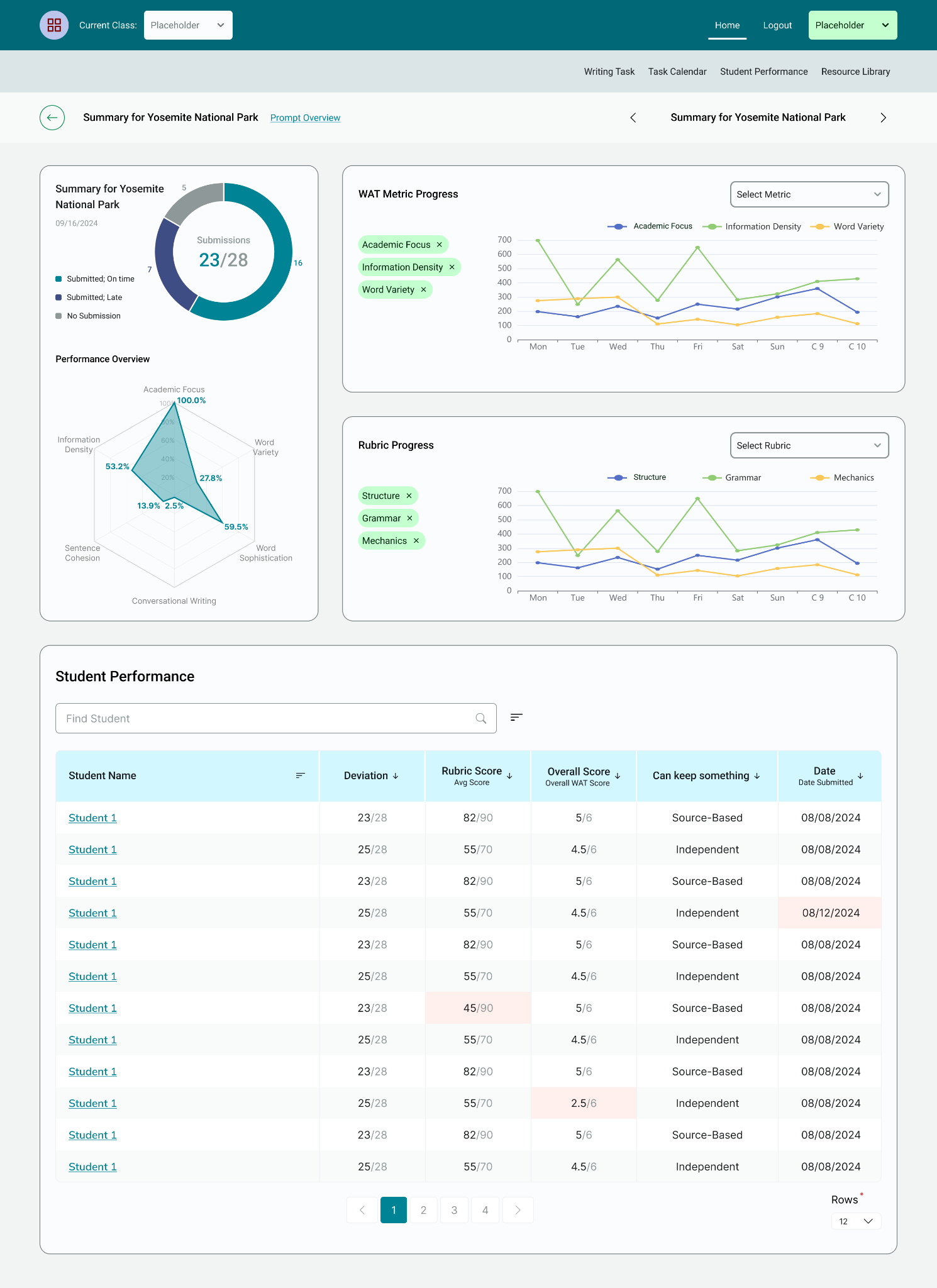

Perceived Usefulness of WAT Metrics

Instructors rated the usefulness of WAT metrics for supporting writing instruction on a scale from 1 (Not useful at all) to 6 (Extremely useful). As shown in Table 6, Development of Ideas received the highest overall rating, followed closely by Academic Focus and Varied Sentence Structure, two of the more advanced analytic features. Conventional Language, Word Concreteness, and Sophisticated Wording received lower ratings, indicating that instructors may perceive these features as less useful in their instruction. The predictive quality scores received the lowest ratings overall, suggesting they were viewed as least useful for formative feedback, which is consistent with a previous design study for WAT (Li et al., 2022).

Metric | Mean (SD) | Min | Max |

|---|---|---|---|

Advanced Analytics | |||

Development of Ideas | 4.75 (2.05) | 1 | 6 |

Academic Focus | 4.25 (1.75) | 1 | 6 |

Varied Sentence Structure | 4.22 (1.39) | 2 | 6 |

Word Variety | 4.00 (1.58) | 2 | 6 |

Academic Writing | 3.88 (1.36) | 1 | 5 |

Language Variety | 3.78 (1.30) | 2 | 6 |

Source Integration | 3.62 (1.51) | 1 | 5 |

Conversational Writing Style | 3.50 (1.31) | 1 | 5 |

Function Word Repetition | 3.33 (1.12) | 2 | 5 |

Sentence Cohesion | 3.22 (1.72) | 1 | 6 |

Information Density | 3.22 (1.30) | 1 | 5 |

Sophisticated wording | 3.11 (1.54) | 1 | 6 |

Conventional Language | 2.89 (1.54) | 1 | 5 |

Word Concreteness | 2.67 (1.12) | 1 | 4 |

Basic Analytics | |||

Word Count | 4.33 (1.50) | 2 | 6 |

Spelling Errors | 4.33 (1.50) | 2 | 6 |

Paragraph Count | 3.44 (1.33) | 2 | 5 |

Sentence Count | 2.78 (1.39) | 1 | 5 |

Average Sentence Length | 2.67 (1.32) | 1 | 5 |

Average Word Length | 1.78 (1.30) | 1 | 4 |

Predictive Quality Scores | |||

Wording Quality (Summary Only) | 3.11 (1.27) | 1 | 5 |

Content Quality (Summary Only) | 3.00 (1.12) | 1 | 4 |

Overall Quality Score | 2.33 (1.22) | 1 | 5 |

Note. Ratings were on a 6-point scale ranging from 1 (Not useful at all) to 6 (Extremely useful).

In addition to evaluating the metrics themselves, instructors rated the interpretability and clarity of WAT’s visual feedback displays. These items addressed the clarity and usefulness of visual features, such as the color-coded spectrum, the “Click Here to Learn” link, and the “i” icon definitions. As shown in Table 7, instructors viewed the clickable help features to be useful instructional supports. However, they expressed more mixed views about the visual spectrum: while its inclusion was seen as moderately helpful, lower ratings for label accuracy and ease of understanding suggest that this display may require redesign to better support student understanding.

Item Description | Mean (SD) | Min | Max |

|---|---|---|---|

“Click Here to Learn” Link Useful | 4.00 (1.00) | 2 | 6 |

“i” Icon Definitions Helpful | 3.67 (1.12) | 2 | 5 |

Visual Spectrum Helpful | 3.67 (1.32) | 1 | 5 |

Visual Spectrum Labels Accurate | 3.11 (1.17) | 1 | 5 |

Visual Spectrum Easy to Understand | 2.67 (1.22) | 1 | 4 |

Note. Items were rated on a scale from 1 (Strongly Disagree) to 6 (Strongly Agree).

Perceived Usefulness and Structure of WAT Feedback Comments

Instructors also rated the perceived usefulness and clarity of WAT’s written feedback comments, which are associated with each advanced metric. These comments are organized into a “Why–When–How” format that aims to support student revision by explaining the purpose of a metric (Why), its ideal application (When), and actionable strategies (How). As shown in Table 8, instructors generally rated the feedback comments positively. They found the comments helpful for revision and easy to understand, with the WHEN and HOW sections receiving particularly strong ratings. The overall structure of the feedback was seen as beneficial for guiding revision. However, the WHY section, which includes metric definitions, received more mixed responses, and the lowest-rated item asked whether additional detailed definitions might be helpful or overwhelming. These results suggest that simplifying or refining feedback comments and their presentation may improve accessibility for students.

Metric | Mean (SD) | Min | Max |

|---|---|---|---|

Feedback comments are helpful for revision | 4.11 (1.17) | 2 | 6 |

WHEN section clarifies when the current writing is beneficial | 4.11 (0.78) | 3 | 5 |

Overall “Why–When–How” structure is helpful for student revision | 4.11 (0.78) | 3 | 5 |

Feedback comments are easy to understand | 4.0 (0.87) | 3 | 5 |

HOW section provides specific revision strategies | 4.0 (0.71) | 3 | 5 |

Feedback comments are displayed clearly | 3.89 (0.93) | 3 | 5 |

Comments contain the appropriate amount of information | 3.78 (0.97) | 2 | 5 |

WHY section helps students decide whether to revise | 3.67 (1.41) | 1 | 5 |

Additional metric definitions would be helpful vs. overwhelming (reverse-coded) | 2.67 (1.22) | 1 | 5 |

Note. Items were rated on a scale from 1 (Strongly Disagree) to 6 (Strongly Agree).

Perceived Usefulness of Instructional Affordances

Instructors evaluated the perceived usefulness of specific WAT features related to instructional tasks (Table 9). These affordances were grouped into two categories: features for assigning writing tasks and features for providing feedback. Participants responded to each item using a 6-point scale ranging from 1 (Not useful at all) to 6 (Extremely useful). Ratings reflected moderate to high perceived usefulness for features that supported task customization, scaffolded revision, and selective analytics displays. The most highly rated affordances included uploading personalized rubrics and assigning multiple drafts for revision. In contrast, features related to fixed writing task types (e.g., summary writing) and directing students to basic and advanced analytics received lower scores.